The Problem

Why Software Doesn't Fix a Data Problem

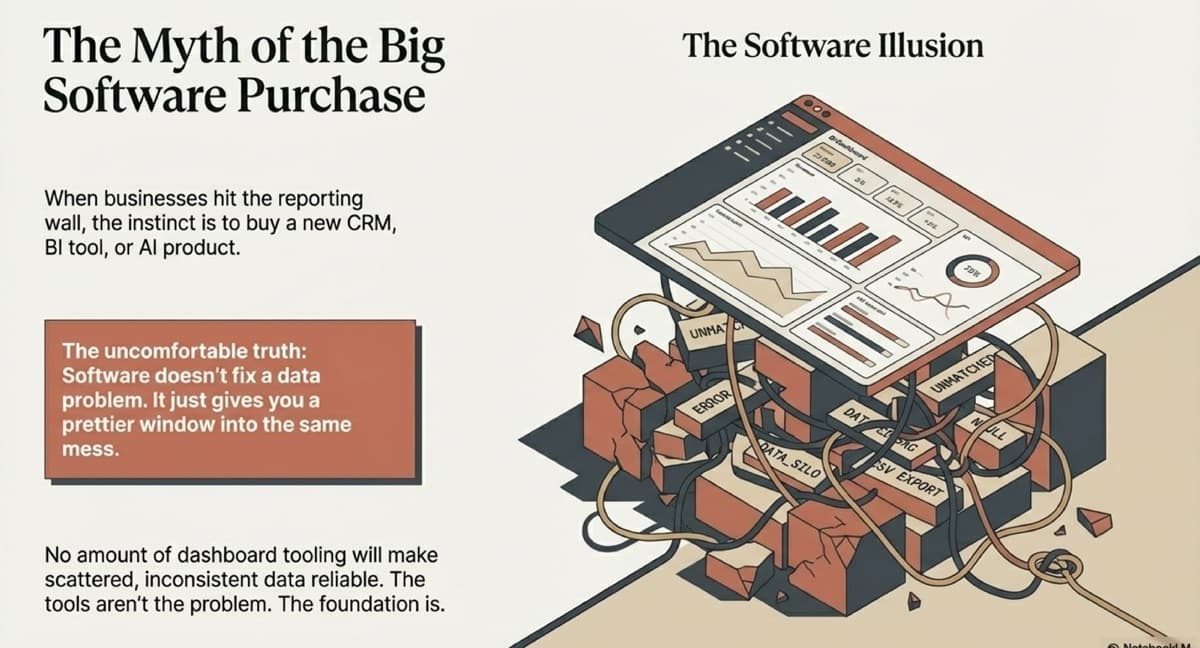

The uncomfortable truth about the big software purchase.

When businesses hit the reporting wall — when the numbers stop making sense, when the board pack takes a week to assemble, when nobody trusts the dashboards — the instinct is to buy something. A new CRM. A better BI tool. An AI product that promises to surface insights automatically. It feels like progress. It is almost always a mistake.

Software does not fix a data problem. It just gives you a prettier window into the same mess.

The Software Illusion

Modern SaaS products are extraordinarily good at demos. They show you beautiful dashboards, drag-and-drop report builders, AI-powered anomaly detection. What they cannot show you is what happens when the data feeding those dashboards is inconsistent, duplicated, or missing. A study published in Harvard Business Review found that only 3% of companies' data meets basic quality standards (Harvard Business Review, 2017). A dashboard built on messy data does not give you insights. It gives you a false sense of confidence, which is worse than having no dashboard at all, because now you are making decisions based on numbers that look authoritative but are fundamentally unreliable.

3% of companies' data meets basic quality standards (Harvard Business Review, 2017)

Why Businesses Keep Making This Mistake

Software purchases are tangible and satisfying. You can point to a vendor, a contract, a go-live date. They create the feeling of progress. Data foundations, by contrast, are invisible when done well. Nobody celebrates the data pipeline that ran perfectly at 3am. Nobody puts "we normalised 47 transaction types into a unified schema" on a press release. But the pipeline is what makes everything else work. The schema is what makes every future capability possible.

The Compounding Cost

Every software purchase made on top of broken data creates a new integration problem. The CRM does not match the accounting system. The BI tool pulls from the CRM but not the ERP. The AI product needs clean, structured data that does not exist yet. Gartner research shows that poor data quality costs organisations an average of $12.9 million per year (Gartner, 2021). Each new tool adds another silo, another set of definitions, another reconciliation headache. The technology stack grows, but the clarity does not. Cost goes up. Confidence goes down.
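To make the reconciliation headache concrete, here is a minimal sketch of what "the CRM does not match the accounting system" can look like in practice. The customer names, figures, and the `reconcile` helper are all hypothetical, invented purely for illustration.

```python
# Hypothetical exports: revenue per customer as recorded in two systems.
crm_revenue = {"ACME Ltd": 12000.0, "Globex": 8500.0, "Initech": 4300.0}
accounting_revenue = {"Acme Limited": 12000.0, "Globex": 8350.0, "Umbrella": 990.0}


def reconcile(crm, accounting, tolerance=0.01):
    """Compare per-customer totals from two systems and report disagreements."""
    # Customers that appear in one system but not the other.
    only_in_crm = sorted(set(crm) - set(accounting))
    only_in_accounting = sorted(set(accounting) - set(crm))
    # Customers present in both, but with different figures.
    mismatched = {
        name: (crm[name], accounting[name])
        for name in sorted(set(crm) & set(accounting))
        if abs(crm[name] - accounting[name]) > tolerance
    }
    return only_in_crm, only_in_accounting, mismatched


only_in_crm, only_in_accounting, mismatched = reconcile(crm_revenue, accounting_revenue)
print(only_in_crm)         # customers the accounting system does not recognise
print(only_in_accounting)  # customers the CRM does not recognise
print(mismatched)          # same customer, different numbers
```

Note that "ACME Ltd" and "Acme Limited" are probably the same customer, yet a naive comparison treats them as two. A third tool layered on top inherits that ambiguity rather than resolving it.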

What to Do Instead

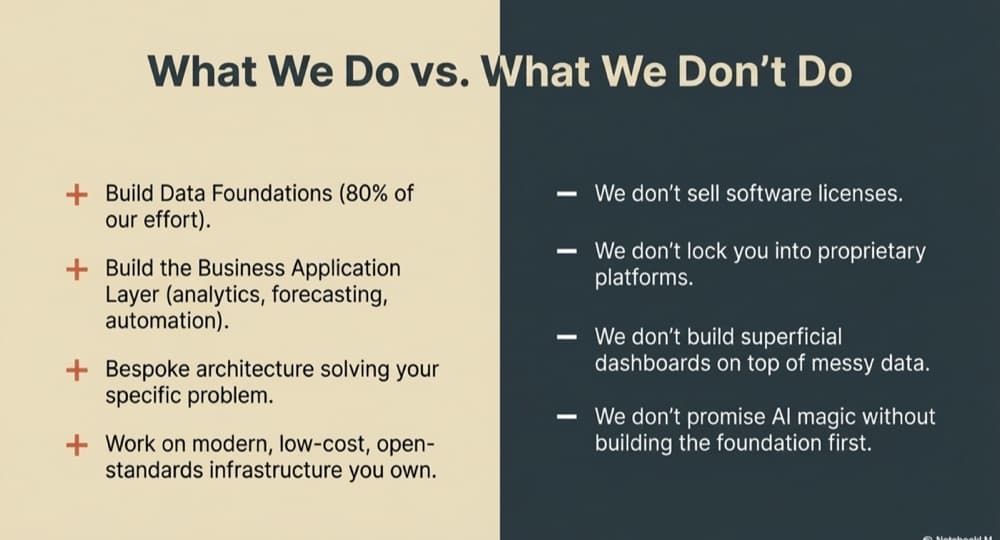

Before any software investment, ask one question: is the data underneath this tool structured, connected, and reliable? If the answer is no, the software will underperform — not because the software is bad, but because the foundation is missing. The most capital-efficient investment a growing business can make is not buying software or hiring a data scientist. It is structuring the data properly. Once the foundation exists, every tool you plug in works better, implements faster, and costs less to maintain.
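One rough way to start answering that question is to profile an export before any tool is bought. The sketch below counts duplicate identifiers and missing values in a handful of records. The records, field names, and the `profile` helper are hypothetical, and a real assessment would cover far more than these two checks.

```python
from collections import Counter

# Hypothetical customer records as they might arrive from a system export.
records = [
    {"id": "C001", "email": "ana@example.com", "country": "GB"},
    {"id": "C002", "email": "", "country": "United Kingdom"},
    {"id": "C002", "email": "bo@example.com", "country": "GB"},
    {"id": "C003", "email": "cy@example.com", "country": None},
]


def profile(rows, key="id", required=("email", "country")):
    """Count duplicate keys and missing required values in a list of dicts."""
    key_counts = Counter(row[key] for row in rows)
    duplicates = sorted(k for k, n in key_counts.items() if n > 1)
    # Treat empty strings and None as missing.
    missing = {
        field: sum(1 for row in rows if not row.get(field))
        for field in required
    }
    return {"rows": len(rows), "duplicate_keys": duplicates, "missing": missing}


report = profile(records)
print(report)
```

If even a small sample like this shows duplicates and gaps, the same problems will sit behind whatever dashboard is placed on top of it.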

The Right Sequence

Connect your systems. Normalise your data. Store it in a modern, open-standards database you own. Then — and only then — layer on the dashboards, the analytics, the AI. This sequence is not optional. It is the difference between a technology investment that compounds in value and one that compounds in complexity.
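As an illustration only, the first three steps can be sketched in a few lines. Everything here is an assumption made for the example: the two source systems, their field names, the unified `transactions` schema, and the use of an in-memory SQLite database as a stand-in for whichever database you actually own.

```python
import sqlite3

# Step 1 (connect): hypothetical rows pulled from two source systems,
# each with its own field names, formats, and units.
crm_rows = [{"CustomerName": " Acme Ltd ", "DealValue": "1,200.50", "Closed": "2024-03-01"}]
billing_rows = [{"customer": "acme ltd", "amount_pence": 120050, "invoice_date": "2024-03-01"}]


# Step 2 (normalise): map each source into one shared schema.
def from_crm(row):
    return {
        "customer": row["CustomerName"].strip().lower(),
        "amount": float(row["DealValue"].replace(",", "")),
        "date": row["Closed"],
        "source": "crm",
    }


def from_billing(row):
    return {
        "customer": row["customer"].strip().lower(),
        "amount": row["amount_pence"] / 100,  # pence to pounds
        "date": row["invoice_date"],
        "source": "billing",
    }


unified = [from_crm(r) for r in crm_rows] + [from_billing(r) for r in billing_rows]

# Step 3 (store): load the unified rows into a database you control.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE transactions (customer TEXT, amount REAL, date TEXT, source TEXT)"
)
conn.executemany(
    "INSERT INTO transactions VALUES (:customer, :amount, :date, :source)", unified
)
stored = conn.execute(
    "SELECT customer, source, amount FROM transactions ORDER BY source"
).fetchall()
print(stored)
```

Once both systems describe the same customer and the same amount in the same way, a dashboard or model built on the `transactions` table has one definition to work from instead of two.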

Sources

- Harvard Business Review, "Only 3% of Companies' Data Meets Basic Quality Standards" (2017)

- Gartner, "How to Improve Your Data Quality" (2021)

